GSA SER Link Lists

Understanding GSA SER Link Lists

At the core of any automated link building campaign with GSA Search Engine Ranker lies a critical component that often decides success or failure: the quality and structure of the target lists. These collections of URLs, commonly referred to as GSA SER link lists, are essentially the fuel that powers the software. Without a steady supply of high-quality, unspammed platforms where backlinks can be created, even the most sophisticated configurations will yield mediocre results. A link list is not merely a random dump of domains; it is a curated selection of websites compatible with GSA SER’s many engines, ready to accept submissions.

Why Curated GSA SER Link Lists Matter

The internet is littered with publicly scraped, overused lists that can do more harm than good. Relying on low-grade targets often leads to a sky-high rate of failed verifications, wasted server resources, and a backlink profile drowned in spammy neighborhoods. Premium GSA SER link lists are meticulously filtered to ensure a high percentage of live, do-follow, and indexable pages. A well-sorted list saves days of scraping time and dramatically improves the LpM (links per minute) metric, as the software spends less time hitting dead domains and more time building actual backlinks on platforms like WordPress, Joomla, or Article Directory engines.

Contextual vs. Non-Contextual Targets

Not all targets are created equal. A strategic approach splits GSA SER link lists into contextual engines and other platform types. Contextual lists focus on article sites, wikis, and Web 2.0 platforms where your anchor text sits inside a relevant body of content. These lists carry more weight for Tier 1 and Tier 2 link building. Non-contextual targets, such as blog comments, guestbook signings, image comments, or cheap social bookmarking platforms, are typically relegated to Tier 3 or mass power-up duties. Keeping the two groups in separate lists lets users assign different project rules to each and avoids the mistake of blasting a money site with aggressive, low-quality engines.

Where to Source Reliable GSA SER Link Lists

Building a fresh, high-converting list from scratch is a time-intensive process that involves advanced search engine scraping, footprint extraction, and automated verification. Many experienced users purchase monthly updated GSA SER link lists from trusted private vendors who use dedicated hardware to identify and clean millions of potential URLs. These verified lists undergo rigorous checks: duplicate domains are removed, sites protected by Akismet or similar spam filters are dropped, and platform compatibility is confirmed. While free lists exist, they are often shared to the point of exhaustion, meaning webmasters have already deleted the pages or closed the forms on which GSA SER relies.

Self-Scraping with Custom Footprints

For those who prefer a DIY approach, building your own GSA SER link lists demands a solid footprint library. Scraping tools like ScrapeBox or GSA’s own internal scraper can harvest potential targets using footprints such as "powered by wordpress" or "leave a reply". The real magic happens during the filtering stage. A raw scrape must be deduplicated, checked against malware databases, and tested for open comment forms or registration pages. The most successful SEOs run multiple dedicated servers solely to verify targets, purging any link that fails to return a successful test submission, resulting in a lean, mean list ready for high-speed campaigns.
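The deduplication step described above can be sketched as follows. This is a minimal illustration, not part of GSA SER or ScrapeBox: the function names `domain_of` and `dedupe_by_domain` are hypothetical, and the sketch assumes that one URL per domain is enough and that a bare host without a scheme should be treated as plain HTTP.

```python
from urllib.parse import urlsplit


def domain_of(url: str) -> str:
    """Return the lowercased host of a URL, without a leading 'www.'."""
    url = url.strip()
    if "://" not in url:
        url = "http://" + url  # assumption: bare hosts are plain HTTP
    try:
        host = urlsplit(url).hostname or ""
    except ValueError:
        return ""  # malformed URL, e.g. a broken IPv6 literal
    return host[4:] if host.startswith("www.") else host


def dedupe_by_domain(urls):
    """Keep the first URL seen for each domain, preserving input order."""
    seen = set()
    kept = []
    for raw in urls:
        url = raw.strip()
        if not url:
            continue  # skip blank lines
        domain = domain_of(url)
        if not domain or domain in seen:
            continue  # unparseable or already covered
        seen.add(domain)
        kept.append(url)
    return kept
```

Keeping the first occurrence preserves the order of the raw scrape, so the result does not depend on set iteration order. Malware checks and form testing are separate stages and are not covered here.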

Optimizing Campaigns with Segmented Link Lists

Dumping the same list into every campaign is a recipe for uneven results. Advanced usage of GSA SER link lists involves segmentation based on domain metrics. A list containing high Domain Authority (DA) or Trust Flow targets should be reserved for precious Tier 1 buffers. Medium-quality lists with decent IP diversity are perfect for Tier 2 layers, while massive, low-metric lists are used strictly for indexer injections and raw power. Segmenting by language and country-specific TLDs is another powerful method to geo-target link building and avoid creating a suspicious, globally scattered footprint.
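As a rough sketch of this kind of segmentation, the snippet below buckets targets by a metric score and by the last label of the hostname. The thresholds `TIER1_MIN` and `TIER2_MIN` and the 0-100 score are assumptions made for illustration; the scores would have to come from whatever metrics provider you use, and taking only the last hostname label means `example.co.uk` is grouped under `uk`.

```python
from collections import defaultdict
from urllib.parse import urlsplit

# Hypothetical thresholds on a 0-100 metric score; tune to your own data.
TIER1_MIN = 40
TIER2_MIN = 15


def tier_for(score: float) -> str:
    """Map a metric score to a tier label."""
    if score >= TIER1_MIN:
        return "tier1"
    if score >= TIER2_MIN:
        return "tier2"
    return "tier3"


def segment(targets):
    """Group (url, score) pairs into {(tier, tld): [urls]} buckets."""
    buckets = defaultdict(list)
    for url, score in targets:
        host = urlsplit(url).hostname or ""
        # Last hostname label only, so 'example.co.uk' lands under 'uk'.
        tld = host.rsplit(".", 1)[-1] if "." in host else ""
        buckets[(tier_for(score), tld)].append(url)
    return dict(buckets)
```

Each bucket can then be written to its own file, which keeps high-metric targets out of the lists used for lower tiers.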

Maintenance and Deletion Cycles

A static list depreciates rapidly. Webmasters delete spammed directories, and platforms change their software, rendering previous footprints obsolete. Professional GSA SER link lists require constant culling. After each campaign run, feeding the failed-submission logs back into a filter helps identify dead targets. Automated maintenance scripts can strip out URLs that return 404 errors or trigger adware warnings. A link list that isn’t cleaned at least every two weeks will silently kill your success rate, causing GSA SER to cycle through endless timeouts instead of posting live backlinks.
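The culling step can be sketched as a simple filter, assuming the failed URLs have already been extracted from the logs into a plain list. The function names `normalize` and `cull` are hypothetical, and the log format itself is not modeled here.

```python
def normalize(url: str) -> str:
    """Strip whitespace and a trailing slash so near-identical URLs match."""
    return url.strip().rstrip("/")


def cull(targets, failed):
    """Return the targets that do not appear in the failed list, in order."""
    failed_keys = {normalize(u) for u in failed if u.strip()}
    return [
        u.strip()
        for u in targets
        if u.strip() and normalize(u) not in failed_keys
    ]
```

Matching is exact apart from whitespace and the trailing slash, so a failure on one page does not remove other pages on the same domain. Whether a whole domain should be dropped after repeated failures is a separate policy decision.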

The Link Between List Quality and Indexing

Even the best tiered structure falls apart if search engines refuse to crawl the pages hosting your links. The caliber of your GSA SER link lists determines the crawlable environment of your backlinks. Links placed on pages that are already indexed and receiving frequent crawler visits pass equity almost immediately. Conversely, links on abandoned profiles or orphaned pages will never be discovered without excessive pinging and indexing services. This is why premium lists often include pre-checked live URLs with verified Google cache status, ensuring that each placed link has a fighting chance to contribute to rankings instead of evaporating into the void.

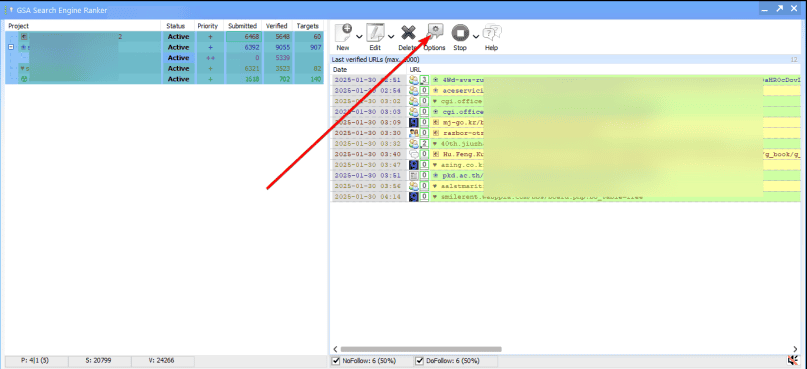

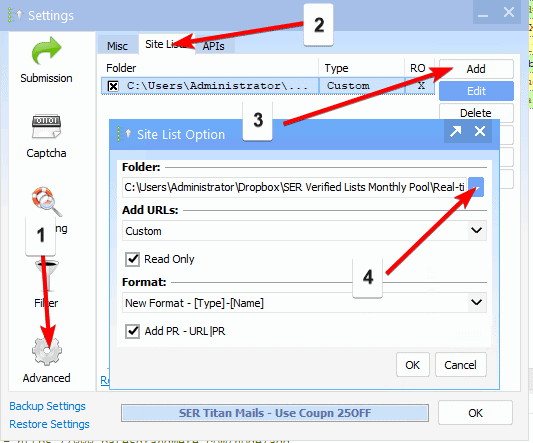

Automating Delivery for Agency Work

For agencies managing dozens of SEO campaigns, manually moving .txt files around is inefficient. Integrating a central repository for GSA SER link lists that syncs with multiple server instances ensures uniformity. Automated scripts can pull freshly verified lists at set intervals, load them into GSA SER via the command-line interface, and reset projects without human interference. This kind of streamlined automation allows a single operator to manage massive link pyramids, pushing fresh targets into the rotation while automatically archiving lists that have burned out, creating a self-sustaining link building ecosystem.
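The file-distribution part of such a setup might look like the sketch below. It only copies `.txt` lists from a central folder into one folder per instance and moves any replaced copy into an archive; it does not interact with GSA SER itself, and how each instance picks up the files is left out. The function name `sync_lists` and all folder layouts are assumptions for illustration.

```python
import shutil
from pathlib import Path


def sync_lists(repo_dir, instance_dirs, archive_dir):
    """Copy each .txt list to every instance folder, archiving old copies.

    Returns the number of files copied.
    """
    repo = Path(repo_dir)
    archive = Path(archive_dir)
    archive.mkdir(parents=True, exist_ok=True)
    copied = 0
    for source in sorted(repo.glob("*.txt")):
        for instance in map(Path, instance_dirs):
            instance.mkdir(parents=True, exist_ok=True)
            target = instance / source.name
            if target.exists():
                # Keep the previous copy, prefixed with the instance folder name.
                archived = archive / f"{instance.name}_{source.name}"
                shutil.move(str(target), str(archived))
            shutil.copy2(source, target)
            copied += 1
    return copied
```

Run from a scheduler at a fixed interval, this keeps every instance on the same list version. Only the most recent replaced copy is kept per instance and file name, so a longer history would need timestamped archive names.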